The Seven Mandatory Requirements for High-Risk AI Systems

A technical breakdown

Many organizations that believe they understand their EU AI Act obligations for high-risk AI systems have not read Articles 9 through 17 carefully enough to discover how far they are from satisfying them.

The assumption is common: a policy document exists, a risk assessment was conducted, the AI system is in deployment, and so compliance is presumed. But the EU AI Act does not require compliance at the level of policy language; it requires compliance at the level of evidence architecture, documentation discipline, and operational process. A policy document is not evidence. A risk assessment conducted once at design time is not an iterative risk management system. A deployment that no one monitors post-market is not post-market monitoring.

This is the third article in our ten-part series on AI governance. If you have not yet read Why the EU AI Act Applies to You, that article provides the necessary context for understanding who is in scope and which role obligations apply. This article focuses on what high-risk system providers must actually demonstrate to be compliant, and on why the gap between what organizations have done and what the regulation requires is, in many cases, very large.

The Integration Problem

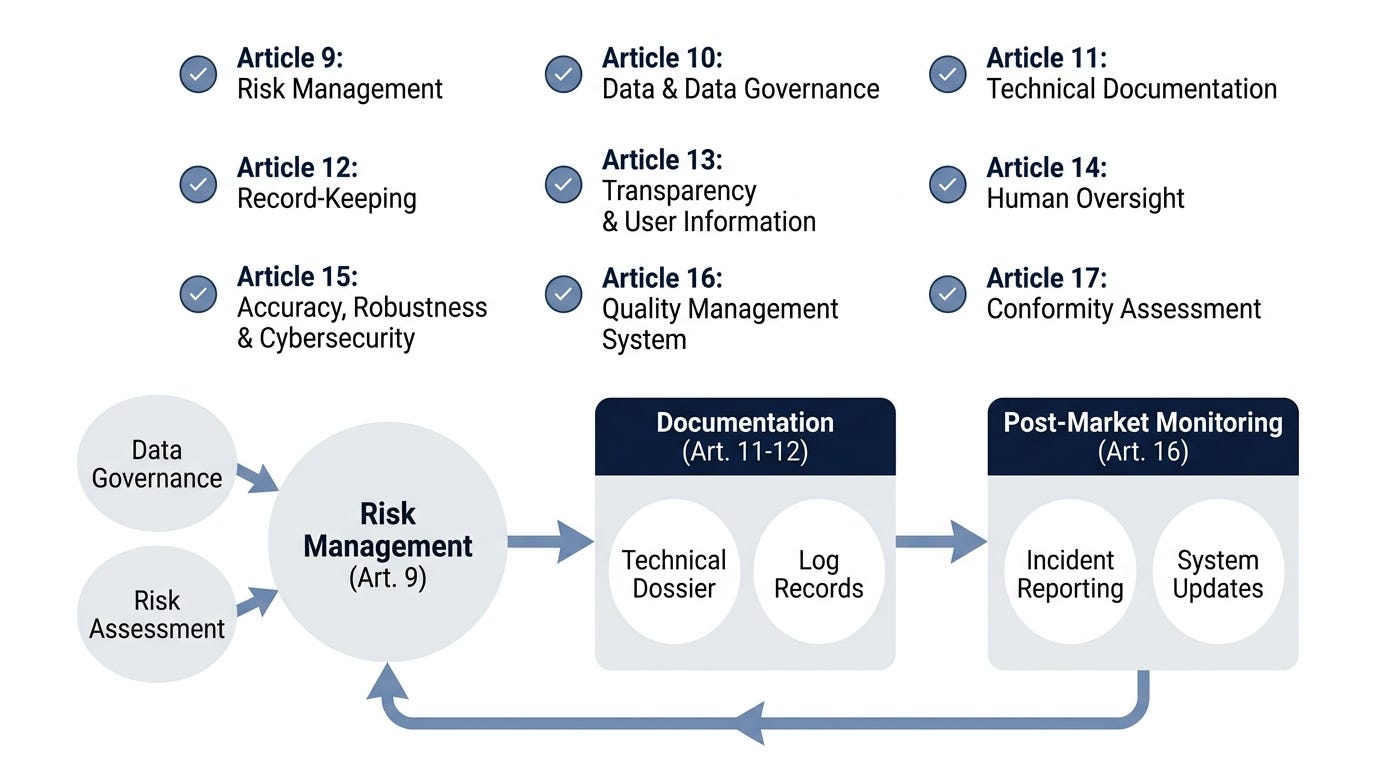

Articles 9 through 17 are commonly discussed as seven separate requirements. This framing is misleading. They form an integrated system: a gap in one creates a compliance gap in others, because the evidence for each requirement connects to the evidence for the others.

A risk management system (Article 9) that does not produce documented outputs cannot support a post-market monitoring procedure (Article 17) that requires updating risk records when new risks emerge. A technical documentation system (Article 11) that is not updated when the model changes means the conformity declaration that references that documentation is inaccurate. A QMS (Article 16) that does not have a change management process means every system update creates a documentation compliance violation.

Integrating the requirements is not optional architecture; it is the compliance architecture. Organizations that address each article as a standalone checklist have built compliance scaffolding that collapses under regulatory scrutiny.

Article 9 - Risk Management System

The risk management system is the foundation of the entire compliance structure. Article 9 requires providers to establish, document, and maintain a risk management system that operates throughout the lifecycle of the high-risk AI system.

What “iterative” means in practice: The most significant word in Article 9 is “iterative.” A static risk assessment conducted at design time does not satisfy the requirement. The risk management process must continue post-deployment as new risks emerge, as the deployment context changes, and as the system evolves. A risk record opened during design must show evidence of review and update at deployment and at post-market phases. Residual risks (those that remain after mitigation measures are applied) must be formally accepted and documented.

In practice, many organizations without an existing quality management system have no documented risk management process for AI systems. Opening a risk record and keeping it updated through the system lifecycle requires process infrastructure that typically has a six-to-twelve-month lead time to implement properly.

What must be documented:

Risk identification methodology

Risk evaluation criteria and acceptance thresholds

Mitigation measures applied and their effectiveness

Residual risk decisions and who approved them

Risk record updates triggered by system changes or post-market data
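One way to picture an "iterative" risk record is as a data structure that keeps its own review history. The sketch below is illustrative only: the class names, field names, and the three lifecycle phases are assumptions made for this example, not a format prescribed by the Act.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional

# Hypothetical lifecycle phases at which a review is expected.
REQUIRED_PHASES = ("design", "deployment", "post-market")


@dataclass
class RiskReview:
    phase: str          # lifecycle phase in which the review happened
    reviewed_on: date
    trigger: str        # what prompted the review (system change, monitoring data, ...)
    outcome: str


@dataclass
class RiskRecord:
    risk_id: str
    description: str
    mitigation: str
    residual_risk: str
    accepted_by: Optional[str] = None   # who formally accepted the residual risk
    reviews: List[RiskReview] = field(default_factory=list)

    def add_review(self, review: RiskReview) -> None:
        self.reviews.append(review)

    def missing_phases(self) -> List[str]:
        """Return the lifecycle phases that have no recorded review."""
        seen = {r.phase for r in self.reviews}
        return [p for p in REQUIRED_PHASES if p not in seen]

    def residual_risk_accepted(self) -> bool:
        return self.accepted_by is not None


record = RiskRecord(
    risk_id="R-001",
    description="Model under-performs for applicants with thin credit files",
    mitigation="Route thin-file cases to manual review",
    residual_risk="Manual reviewers may still defer to the model score",
)
record.add_review(
    RiskReview("design", date(2025, 1, 15), "initial assessment", "mitigation defined")
)

print(record.missing_phases())          # ['deployment', 'post-market']
print(record.residual_risk_accepted())  # False
```

A record that was opened at design time and never touched again shows up immediately: two phases are missing and no one has signed off on the residual risk.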

Article 10 - Data Governance

Article 10 requires that training, validation, and testing data be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete in view of the intended purpose. Appropriate data governance mechanisms must be established.

The bias examination requirement is explicit: the Act requires providers to examine whether training data may introduce bias, and this is not a discretionary best practice. Providers must document the bias examination methodology, findings, and any mitigation measures applied. A data governance process that does not produce this documentation does not satisfy Article 10.
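As a rough illustration of what a documented bias examination step could produce, the sketch below compares each group's share of the training data against a reference share and reports the groups that deviate by more than a tolerance. The function name, the group labels, the reference shares, and the 5-point tolerance are all assumptions for this example; a real examination would use a methodology chosen and justified by the provider.

```python
from collections import Counter


def representation_gaps(samples, reference_shares, tolerance=0.05):
    """Compare each group's share of the data with a reference share.

    Returns {group: (observed_share, reference_share)} for every group whose
    absolute difference exceeds `tolerance`.
    """
    counts = Counter(samples)
    total = len(samples)
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            gaps[group] = (round(observed, 3), expected)
    return gaps


# Hypothetical group labels attached to 100 training records.
training_groups = ["A"] * 80 + ["B"] * 15 + ["C"] * 5
# Hypothetical shares in the population the system is intended to serve.
reference = {"A": 0.60, "B": 0.15, "C": 0.25}

findings = representation_gaps(training_groups, reference)
print(findings)  # {'A': (0.8, 0.6), 'C': (0.05, 0.25)}
```

The output is the kind of finding that belongs in the documentation, alongside the methodology that produced it and the mitigation that followed.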

Special category data (Article 10(5)): When training data contains special category data under GDPR Article 9 (health data, biometric data, genetic data, race or ethnicity, political opinions, religious belief, sexual orientation), providers must implement additional safeguards. This creates a GDPR-EU AI Act intersection that requires joint legal review. The two regulations interact at this point, and organizations that treat them as separate compliance tracks frequently discover gaps in their overlapping obligations.

Article 11 - Technical Documentation

Technical documentation must be drawn up before the system is placed on the market and kept up-to-date. Article 11 specifies this obligation; Annex IV defines what the documentation must contain.

The technical documentation must include, at minimum:

System description and intended purpose

System architecture: design decisions, algorithms, training approaches

Training methodology and training data characteristics

Testing procedures and results

Known limitations and acceptable risks

Cybersecurity measures

Post-market monitoring plan

The documentation update obligation is continuous

Many organizations treat technical documentation as a one-time deliverable at launch. The Act requires documentation to be “kept up-to-date,” meaning every significant system change must be reflected in the documentation. This requires a documentation change management process that is itself part of the QMS (Article 16).
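A simple way to detect documentation drift is to record, in the documentation itself, a fingerprint of the model it describes, and to compare that fingerprint against whatever is actually deployed. The sketch below assumes a hypothetical `DocumentationEntry` record and uses a SHA-256 digest of the model artifact as the fingerprint; neither is mandated by the Act.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class DocumentationEntry:
    doc_version: str
    model_fingerprint: str  # digest of the model artifact this documentation describes


def fingerprint(model_bytes: bytes) -> str:
    """Return a SHA-256 hex digest of a serialized model artifact."""
    return hashlib.sha256(model_bytes).hexdigest()


def documentation_is_current(doc: DocumentationEntry, deployed_model: bytes) -> bool:
    """True only if the documentation describes the artifact that is deployed."""
    return doc.model_fingerprint == fingerprint(deployed_model)


# Stand-ins for serialized model files.
model_v1 = b"serialized model weights, version 1"
model_v2 = b"serialized model weights, version 2"

doc = DocumentationEntry(doc_version="1.0", model_fingerprint=fingerprint(model_v1))

print(documentation_is_current(doc, model_v1))  # True
print(documentation_is_current(doc, model_v2))  # False
```

Run as a release check, a comparison like this turns "kept up-to-date" from an intention into a gate: a model update that is not accompanied by a documentation update fails the check.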

Evidence for conformity assessment

Technical documentation is the primary evidence used by conformity assessment bodies to verify compliance. Poor documentation makes compliance difficult or impossible to demonstrate. The documentation is not a formality; it is the compliance record.

Article 12 - Automatic Logging

High-risk AI systems must be designed and developed to enable automatic logging of events during the system’s lifecycle. Logs must be retained for a minimum of six months.

What must be logged: Events during operation that are relevant for monitoring system behavior, detecting potential issues, investigating incidents, and maintaining compliance with other requirements. The scope of “relevant” is defined by those other requirements: what you need to investigate a serious incident (Article 72), demonstrate that human oversight was maintained (Article 14), and verify system performance against documented metrics (Article 17) determines what your logging system must capture.

Six-month minimum retention is a floor, not a ceiling. The clock starts from the moment of the event, not from system deployment. In practice, organizations with related legal holds (litigation, regulatory investigations, product liability) should extend retention accordingly. The six-month floor exists because regulators want to be able to examine recent events. If you have active matters that require longer retention, the longer period governs.
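The retention rule described above reduces to a small calculation: the earliest date a log entry may be deleted is the later of the six-month floor (counted from the event) and any legal hold. The sketch below is one possible way to express that; the function names and the calendar-month interpretation of "six months" are assumptions for this example.

```python
import calendar
from datetime import date
from typing import Optional


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping to the last day of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def earliest_deletion(event_date: date,
                      hold_until: Optional[date] = None,
                      floor_months: int = 6) -> date:
    """Earliest deletion date: the retention floor or the legal hold, whichever is later."""
    floor = add_months(event_date, floor_months)
    return max(floor, hold_until) if hold_until else floor


print(earliest_deletion(date(2025, 1, 15)))                    # 2025-07-15
print(earliest_deletion(date(2025, 8, 31)))                    # 2026-02-28
print(earliest_deletion(date(2025, 1, 15), date(2026, 1, 1)))  # 2026-01-01
```

Because the clock runs per event, the calculation is applied to each log entry rather than once per system.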

Log integrity: Deployers are responsible for log integrity. Logs must be tamper-evident and retained in a manner that preserves their evidentiary value. A logging system that can be modified after the fact without leaving a record does not satisfy the requirement.
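Hash chaining is one common technique for making a log tamper-evident: each entry stores the hash of the previous entry, so editing any earlier entry breaks every link after it. The following is a minimal in-memory sketch under that assumption; the entry layout and function names are illustrative, and a production system would also need durable storage and an externally anchored copy of the latest hash.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash a log entry body using a stable JSON serialization."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "prev_hash": prev_hash,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash and check every link; False if anything was altered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_event(log, {"type": "prediction", "decision_id": "d-1", "output": "reject"})
append_event(log, {"type": "human_override", "decision_id": "d-1", "reviewer": "alice"})
print(verify_chain(log))  # True

log[0]["event"]["output"] = "approve"  # simulate an after-the-fact edit
print(verify_chain(log))  # False
```

The chain does not prevent modification; it makes modification detectable, which is what "tamper-evident" asks for.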

Article 13 - Transparency to Users

High-risk AI systems must be designed and developed to enable deployers to understand the system’s output. Instructions for use must include the intended purpose, accuracy metrics and limitations, human oversight measures, and maintenance requirements.

Three key technical implications:

Output interpretability: The system must produce outputs that the deployer can understand. This does not require explainability of model internals; it requires that the system’s behavior is comprehensible in the context of use. A credit scoring system that produces a score without explaining the factors that drove it has a transparency problem even if the underlying model is sound.

Known limitations disclosure: Providers must document and communicate known accuracy limitations. This is distinct from a general obligation to disclose all possible limitations. “Known” means limitations discovered during development and testing, that is, the ones the provider is aware of at the time of deployment.

Intended purpose as a technical boundary: The instructions for use must clearly define the intended purpose. If a deployer uses the system outside that intended purpose, the provider’s conformity declaration does not cover that use. This creates a documentation incentive to be precise about intended purpose, and a deployer incentive to respect the documented boundary.
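To make the credit scoring example concrete, the sketch below returns a score together with the factors that contributed most to it. It assumes a deliberately simple linear scoring model with made-up feature names, weights, and baseline; real systems would need an attribution method suited to their own model.

```python
def score_with_factors(features: dict, weights: dict,
                       baseline: float = 600.0, top_n: int = 2) -> dict:
    """Return a score plus the factors with the largest absolute contribution."""
    # In a linear model, each feature's contribution is weight * value.
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = baseline + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return {"score": round(score, 1), "top_factors": ranked[:top_n]}


# Hypothetical weights and applicant features.
weights = {"payment_history": 80.0, "utilization": -120.0, "account_age_years": 5.0}
applicant = {"payment_history": 0.9, "utilization": 0.5, "account_age_years": 4}

result = score_with_factors(applicant, weights)
print(result["score"])                           # 632.0
print([name for name, _ in result["top_factors"]])  # ['payment_history', 'utilization']
```

The point is the shape of the output: a deployer receives the score and the reasons in the same response, rather than a bare number.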

Article 14 - Human Oversight

High-risk AI systems must be designed and developed to allow natural persons to effectively oversee, monitor, and intervene, including the ability to decide not to use or to stop the system.

The oversight requirement has three tiers, all of which must be satisfied:

Ability to understand: The human overseer must be able to understand what the system is doing and why. This implies output explanations or decision context that allows meaningful human review.

Ability to intervene: The human overseer must have the technical means to override, stop, or redirect the system. A human who can see what the system is doing but cannot change its behavior is not providing the oversight Article 14 requires.

Authority to decide not to use: The human overseer must have the organizational authority to refuse a system’s recommendation or output. Technical capability without organizational authority does not constitute effective oversight.

For most high-risk AI systems, human oversight must be achieved through one or more of:

Output review before action (human-in-the-loop)

Override mechanisms with clear escalation paths

Real-time monitoring dashboards with alert thresholds

Kill switch or emergency stop functionality

Decision documentation and audit trail

The “effective” standard is the critical test: Oversight that exists on paper but is practically inaccessible does not satisfy the requirement. A human overseer who cannot realistically review system outputs before they take effect is not exercising effective oversight. If your oversight mechanism requires someone to be physically present at a screen at the moment a consequential decision is made, and that person is also doing twelve other things, your oversight is not effective.
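The mechanisms listed above (output review, override, emergency stop, audit trail) can be combined in a single review gate. The sketch below is a minimal illustration under assumed names (`OversightGate`, `ReviewAction`); it shows the control flow only and says nothing about whether the reviewer has the time or authority that "effective" oversight requires.

```python
from dataclasses import dataclass, field
from enum import Enum


class ReviewAction(Enum):
    APPROVE = "approve"          # accept the model output as-is
    OVERRIDE = "override"        # replace the model output with the reviewer's decision
    STOP_SYSTEM = "stop_system"  # emergency stop: no further decisions are released


@dataclass
class OversightGate:
    audit_trail: list = field(default_factory=list)
    stopped: bool = False

    def decide(self, decision_id, model_output, reviewer, action, override_value=None):
        """Release a decision only after a human review action is recorded."""
        if self.stopped:
            raise RuntimeError("system stopped by human overseer")
        if action is ReviewAction.STOP_SYSTEM:
            self.stopped = True
            final = None
        elif action is ReviewAction.OVERRIDE:
            final = override_value
        else:
            final = model_output
        self.audit_trail.append({
            "decision_id": decision_id,
            "model_output": model_output,
            "reviewer": reviewer,
            "action": action.value,
            "final_output": final,
        })
        return final


gate = OversightGate()
print(gate.decide("d-1", "reject", "alice", ReviewAction.APPROVE))              # reject
print(gate.decide("d-2", "reject", "alice", ReviewAction.OVERRIDE, "approve"))  # approve
gate.decide("d-3", "reject", "bob", ReviewAction.STOP_SYSTEM)
print(gate.stopped)           # True
print(len(gate.audit_trail))  # 3
```

Every path through the gate, including the stop, leaves an audit trail entry, which is what later allows the provider to demonstrate that oversight actually took place.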

Articles 15, 16, and 17 - The Continuous Compliance Loop

These three articles form a continuous loop: the system must perform accurately and robustly (Article 15), the organization must manage quality systematically (Article 16), and the organization must monitor what happens after deployment and respond when the risk picture changes (Article 17).

Article 15 - Accuracy, Robustness, and Cybersecurity

High-risk AI systems must achieve appropriate levels of accuracy, robustness, and cybersecurity, and perform consistently in relation to their intended purpose over their lifecycle.

The three dimensions are accuracy, robustness, and cybersecurity. Of the three, cybersecurity is where AI-specific concerns are most often overlooked.

AI-specific cybersecurity threats include data poisoning (corrupting training data to alter model behavior), model evasion (adversarial inputs that cause misclassification), model inversion (extracting training data from model outputs), membership inference (determining whether specific data was in the training set), and, for agentic systems, prompt injection (external content that alters system behavior). Article 15 requires protection against these threat classes, not just generic cybersecurity.

Article 16 - Quality Management System

Providers of high-risk AI systems must implement a quality management system (QMS) that ensures and demonstrates compliance with the regulation.

The QMS maps closely to ISO/IEC 42001:2023. ISO 42001 certification does not by itself create a presumption of conformity with Article 16, because under the Act that presumption attaches only to harmonised standards. It is nonetheless the primary bridge between the EU AI Act and the broader ISO governance ecosystem: if you already have ISO 42001 certification, you have the structural foundation for Article 16 compliance.

Minimum QMS components:

Document control for technical documentation

Change management process (for model updates, deployment changes)

Risk management documentation process

Post-market monitoring procedure

Non-conformity and incident response process

Internal audit program

Management review process

The QMS is the system that ties the other requirements together. Organizations without a QMS that attempt to comply with Articles 9–15 individually typically build parallel compliance tracks that are expensive to maintain and difficult to audit. The QMS is not an additional requirement on top of the other requirements; it is the structural framework that makes the other requirements sustainable.
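The tying-together role of the QMS is easiest to see in change management: a model update should not be released until the risk record has been reviewed, the technical documentation updated, and an approver recorded. The sketch below models that gate with a hypothetical `ChangeRequest` record; the three checks are assumptions chosen to mirror the requirements discussed in this article.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ChangeRequest:
    change_id: str
    summary: str
    risk_record_reviewed: bool = False    # link to risk management
    documentation_updated: bool = False   # link to technical documentation
    approved_by: Optional[str] = None     # accountability for the release

    def blocking_items(self) -> List[str]:
        """List everything that still prevents this change from being released."""
        blockers = []
        if not self.risk_record_reviewed:
            blockers.append("risk record not reviewed")
        if not self.documentation_updated:
            blockers.append("technical documentation not updated")
        if self.approved_by is None:
            blockers.append("no approver recorded")
        return blockers

    def ready_for_release(self) -> bool:
        return not self.blocking_items()


change = ChangeRequest("CR-042", "Retrain model on Q3 data", risk_record_reviewed=True)
print(change.blocking_items())
# ['technical documentation not updated', 'no approver recorded']
print(change.ready_for_release())  # False
```

A single gate of this kind replaces three parallel checklists, which is the practical meaning of the QMS as a structural framework.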

Article 17 - Post-Market Monitoring

Providers must establish and maintain a post-market monitoring system to collect and document data about the AI system’s performance and risks throughout its lifecycle.

The active monitoring requirement is non-negotiable. A passive post-market monitoring system (one that collects complaints and waits for problems to be reported) does not satisfy Article 17. Active monitoring means proactively measuring system performance against defined KPIs, instrumenting the system to emit performance data, and updating risk records when new risks are identified.
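The difference between passive and active monitoring can be shown in a few lines: an active check compares measured KPIs against defined bounds and treats missing data as a finding in its own right. The KPI names and thresholds below are hypothetical, and the function is a sketch rather than a complete monitoring system.

```python
def check_kpis(measurements: dict, thresholds: dict) -> list:
    """Return (kpi, finding) pairs for every KPI that is out of bounds or has no data."""
    breaches = []
    for kpi, (lower, upper) in thresholds.items():
        value = measurements.get(kpi)
        if value is None:
            # An un-instrumented KPI is itself a monitoring gap.
            breaches.append((kpi, "no data"))
        elif not (lower <= value <= upper):
            breaches.append((kpi, value))
    return breaches


# Hypothetical acceptable ranges defined in the post-market monitoring plan.
thresholds = {
    "accuracy": (0.90, 1.00),
    "false_positive_rate": (0.00, 0.05),
    "human_override_rate": (0.00, 0.20),
}
# Measurements emitted by the deployed system for the current period.
measurements = {"accuracy": 0.87, "false_positive_rate": 0.03}

print(check_kpis(measurements, thresholds))
# [('accuracy', 0.87), ('human_override_rate', 'no data')]
```

Each breach returned here is a candidate trigger for a risk record update, which is how monitoring feeds back into risk management.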

The Article 72 intersection: Article 72 requires serious incident reporting to national market surveillance authorities. Your post-market monitoring system must have a defined escalation path that triggers Article 72 reporting when incidents meet the serious incident threshold. If your post-market monitoring system does not have a clear definition of what constitutes a serious incident and a documented path to regulatory reporting, it does not satisfy either Article 17 or Article 72.
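An escalation path needs two things in writing: a definition of what counts as serious, and a route for each outcome. The sketch below encodes both in the simplest possible form. The category names are placeholders invented for this example; the actual serious incident definition must come from the regulation and from legal review, not from this list.

```python
from dataclasses import dataclass

# Placeholder categories; an organization's own definition must replace these.
SERIOUS_CATEGORIES = {
    "harm_to_health",
    "critical_infrastructure_disruption",
    "fundamental_rights_infringement",
    "serious_property_or_environmental_harm",
}


@dataclass
class Incident:
    incident_id: str
    category: str
    description: str


def route_incident(incident: Incident) -> str:
    """Return the escalation route for an incident based on its category."""
    if incident.category in SERIOUS_CATEGORIES:
        return "regulatory_report"
    return "internal_review"


minor = Incident("I-101", "latency_degradation", "Response times doubled for one hour")
serious = Incident("I-102", "harm_to_health", "Incorrect triage recommendation acted upon")

print(route_incident(minor))    # internal_review
print(route_incident(serious))  # regulatory_report
```

If no one in the organization can write down the equivalent of `SERIOUS_CATEGORIES`, the escalation path does not yet exist.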

The Compliance Architecture Is the Point

The integration of these requirements is not an academic observation; it is the practical reality of EU AI Act compliance. An organization that has addressed each article separately has not built a compliant system. An organization that has built the integrated compliance architecture (where risk management feeds documentation, documentation feeds conformity assessment, post-market monitoring feeds risk management, and the QMS holds the whole structure together) has something that regulators can audit and that provides real protection.

The enforcement authorities for high-risk system obligations become fully operational on 2 August 2026. The window to build this architecture properly (with evidence that can be retrieved, organized, and presented) is narrow. Organizations that are treating this as a documentation exercise will discover that documentation without process infrastructure does not survive regulatory scrutiny.

Next: GPAI Models and the 10²³ FLOP Threshold - What Every Platform Builder Must Know

Download our free High-Risk Requirements Gap Analysis & Self-Assessment Templates for Providers and Deployers.