Local Models - A Guide to Running LLMs on Your Hardware

A landscape review of the best local language models in 2026 - from beefy 70B giants to pocket-sized 0.8B sidekicks

Not so long ago, running a halfway-decent language model locally meant praying to the GPU gods and sacrificing your afternoon to a loading spinner. Those days are gone. The local LLM ecosystem has evolved at a pace that would make even the most jaded tech optimist raise an eyebrow.

Today’s landscape offers genuine, production-ready models for nearly every machine configuration, from beefy workstations with 64GB+ of RAM down to laptops that wouldn’t dream of touching a gaming GPU. The question isn’t whether local AI is viable anymore. It’s which model actually belongs on your system.

This guide cuts through the noise. I’ve attempted to draw a clear picture of where each model shines, where it wobbles, and (most importantly) which task it was born to handle.

Let’s dive in.

Understanding the Stack - Quantization, VRAM, and Why It Matters

Before getting to the good stuff, a quick explainer for the uninitiated.

VRAM (Video RAM) is the memory on your graphics card; think of it as RAM dedicated to the GPU. For the fastest local inference, the model weights (plus the KV cache that holds your context) should fit entirely in VRAM. Runtimes such as llama.cpp can offload some layers to system RAM when they don’t fit, but at a significant speed cost. More VRAM means you can run larger models or higher-precision quantizations.

When we talk about models in this guide, we’re almost exclusively discussing GGUF-quantized models: quantized weights packaged in the GGUF file format, which squeezes large models into much smaller files with minimal quality loss. The quantization level (Q8_0, Q6_K, Q4_K_M, etc.) indicates the precision at which the model’s weights are stored, roughly the number of bits per weight. Lower numbers mean smaller files and less VRAM required, but also some quality degradation.
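To make “precision at which weights are stored” concrete, here is a minimal sketch of 8-bit block quantization in the spirit of GGUF’s Q8_0 type: weights are split into blocks of 32, and each block stores one scale plus 32 signed 8-bit integers. The block size of 32, the 127 divisor, and the 16-bit scale are our assumptions about the format; the real ggml code is written in C and packs the data differently.

```python
import random

BLOCK_SIZE = 32  # assumed Q8_0-style block length


def quantize_block(weights):
    """Quantize one block of floats to (scale, list of int8 codes)."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127.0 if amax > 0 else 1.0
    # round() to the nearest integer code, clamped to the signed 8-bit range
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from a quantized block."""
    return [scale * q for q in codes]


def bits_per_weight(block_size=BLOCK_SIZE, code_bits=8, scale_bits=16):
    """Storage cost per weight, counting the shared per-block scale."""
    return (block_size * code_bits + scale_bits) / block_size


random.seed(0)
block = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_err = max(abs(a - b) for a, b in zip(block, restored))

print(f"bits per weight: {bits_per_weight():.2f}")  # 8.50
print(f"max abs error:   {max_err:.6f} (scale/2 = {scale / 2:.6f})")
```

The per-block scale is why “8-bit” storage costs slightly more than 8 bits per weight, and why rounding error stays bounded by half a quantization step.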

For context: a Q8_0 model retains ~99% of quality but needs significantly more VRAM than a Q4_K_M model at ~95% quality. For most users, Q6_K or Q4_K_M hits the sweet spot between size and performance.
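A rough rule of thumb for file size is parameters × bits per weight ÷ 8. The sketch below applies it with approximate effective bits-per-weight figures for common llama.cpp quant types; those values are our assumptions (K-quant “mixes” keep some tensors at higher precision, so real files vary), and the fixed 2GB headroom for KV cache and buffers is an arbitrary placeholder, so leave more room for long contexts.

```python
# Approximate effective bits per weight (assumed values; real files vary).
APPROX_BPW = {
    "Q8_0": 8.5,
    "Q6_K": 6.56,
    "Q4_K_M": 4.85,
}


def estimate_gguf_gb(params_billions: float, quant: str) -> float:
    """Estimate weight-file size in decimal GB (1 GB = 1e9 bytes)."""
    bits = params_billions * 1e9 * APPROX_BPW[quant]
    return bits / 8 / 1e9


def fits(params_billions: float, quant: str, vram_gb: float,
         headroom_gb: float = 2.0) -> bool:
    """Crude check: weights plus a fixed headroom for KV cache and buffers."""
    return estimate_gguf_gb(params_billions, quant) + headroom_gb <= vram_gb


for quant in APPROX_BPW:
    size = estimate_gguf_gb(27, quant)
    print(f"27B @ {quant:7s} ~ {size:5.1f} GB, fits in 24 GB: {fits(27, quant, 24)}")
```

For a 27B model this gives roughly 28.7GB at Q8_0, in line with the ~28.6GB quoted for Qwen3.6-27B later in this guide, and about 16.4GB at Q4_K_M.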

Also worth noting: context length (i.e. how many tokens the model can “see” at once) varies dramatically. Some models offer 128K tokens (enough for a short novel), while others push to 1M tokens (enough to ingest your entire code repository).
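Long contexts are not free. With standard attention, every token in the window keeps a key and a value vector in every layer, and that KV cache competes with the weights for VRAM. A back-of-envelope estimate, assuming a hypothetical dense model (64 layers, 8 grouped-query KV heads, head dimension 128, FP16 cache); this is not the configuration of any specific model in this guide.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_elem: int = 2) -> int:
    """Memory for keys + values across all attention layers (the 2 is K and V)."""
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_elem


GIB = 1024 ** 3

# Hypothetical architecture: 64 layers, 8 KV heads, head_dim 128, FP16 cache.
for ctx in (8_192, 131_072, 1_048_576):
    gib = kv_cache_bytes(64, 8, 128, ctx) / GIB
    print(f"{ctx:>9,} tokens -> {gib:6.1f} GiB of KV cache")
```

Under these assumptions the cache grows linearly from 2 GiB at 8K tokens to 32 GiB at 128K and 256 GiB at 1M, which is why 1M-token models need architectural tricks rather than just more memory.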

Let’s meet the players.

The 64GB Tier - Where Power Meets Patience

These are the models that make you reconsider whether you really needed that monitor upgrade. They demand serious hardware but deliver real flagship-level performance.

Qwen3.6-27B - The General Purpose Titan

If you want one model that does nearly everything at a high level (coding, reasoning, agent workflows, general chat), the Qwen3.6-27B is your workhorse. This dense model punches well above its weight class, achieving 77.2% on SWE-bench Verified (a coding benchmark) and 94.1% on AIME26 (math olympiad problems). For reference, that’s better than some models twice its size.

The secret sauce? A hybrid architecture combining Gated DeltaNet with Gated Attention, plus multimodality that handles images and video out of the box. The “Thinking Preservation” mechanism keeps multi-step reasoning coherent across iterations, a godsend for complex agent tasks.
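The recurrence behind Gated DeltaNet is compact enough to sketch. Below is a minimal pure-Python version of the published gated delta rule, S_t = α_t S_{t−1}(I − β_t k_t k_tᵀ) + β_t v_t k_tᵀ with output o_t = S_t q_t, using scalar gates and tiny vectors. It illustrates the idea only and is not Qwen’s implementation: real layers compute q, k, v, α, β from the input with learned projections, normalize keys, and run chunked GPU kernels. The thing to notice is that the state S has a fixed size however many tokens have been seen, unlike a KV cache.

```python
Vector = list[float]
Matrix = list[list[float]]


def zeros(d_v: int, d_k: int) -> Matrix:
    return [[0.0] * d_k for _ in range(d_v)]


def matvec(S: Matrix, x: Vector) -> Vector:
    return [sum(s * xi for s, xi in zip(row, x)) for row in S]


def gated_delta_step(S: Matrix, k: Vector, v: Vector,
                     alpha: float, beta: float) -> Matrix:
    """One step of S_t = alpha * S_{t-1} (I - beta k k^T) + beta v k^T.

    alpha in (0, 1] decays old memory (the "gate"); beta in (0, 1] sets how
    strongly the value stored under key k is overwritten (the "delta rule").
    Rewritten as: alpha*S - beta * (alpha*S k - v) k^T.
    """
    pred = matvec(S, k)                               # what memory returns for k
    err = [alpha * p - vi for p, vi in zip(pred, v)]  # alpha*S k - v
    return [[alpha * S[i][j] - beta * err[i] * k[j] for j in range(len(k))]
            for i in range(len(S))]


def read(S: Matrix, q: Vector) -> Vector:
    """Output o_t = S_t q_t."""
    return matvec(S, q)


S = zeros(d_v=2, d_k=3)
S = gated_delta_step(S, k=[1.0, 0.0, 0.0], v=[0.5, -1.0], alpha=1.0, beta=1.0)
S = gated_delta_step(S, k=[0.0, 1.0, 0.0], v=[2.0, 3.0], alpha=1.0, beta=1.0)
print(read(S, [1.0, 0.0, 0.0]))  # [0.5, -1.0]
print(read(S, [0.0, 1.0, 0.0]))  # [2.0, 3.0]
# Writing to the first key again replaces its value instead of accumulating.
S = gated_delta_step(S, k=[1.0, 0.0, 0.0], v=[9.0, 9.0], alpha=1.0, beta=1.0)
print(read(S, [1.0, 0.0, 0.0]))  # [9.0, 9.0]
```

With unit-norm keys and β = 1, each write makes the memory return exactly v for that key while leaving orthogonal keys untouched; α < 1 gradually fades everything.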

At ~28.6GB in Q8_0, you’ll need a serious GPU. This isn’t a model for the faint-hearted or the RTX 3060 crowd.

Qwen3.6-35B-A3B - The Efficient Performer

Meet the MoE (Mixture of Experts) counterpart to the 27B. With only 3 billion parameters active per token out of 35B total, this model flies: 3-5× faster inference than the dense 27B while maintaining comparable quality.
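The “3B active out of 35B total” idea comes from top-k routing: a small router scores every expert for each token, and only the best few actually run. Here is a toy sketch with four hypothetical experts and top-2 routing. Real MoE layers use learned router matrices, far more experts, and training tricks such as load-balancing losses, none of which are modeled here, and this is not Qwen’s code.

```python
import math
from typing import Callable

Vector = list[float]


def softmax(xs: Vector) -> Vector:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: Vector, router: list[Vector],
                experts: list[Callable[[Vector], Vector]], top_k: int = 2):
    """Route x to the top_k experts by router score and mix their outputs.

    Only top_k experts run, which is why "active" parameters are a small
    fraction of the total.
    """
    logits = [sum(w * xi for w, xi in zip(r, x)) for r in router]
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:top_k]
    gates = softmax([logits[i] for i in chosen])  # renormalize over chosen experts
    out = [0.0] * len(x)
    for g, i in zip(gates, chosen):
        y = experts[i](x)
        out = [o + g * yi for o, yi in zip(out, y)]
    return out, chosen


# Hypothetical experts: each just scales its input by a different factor.
experts = [lambda x, s=s: [s * xi for xi in x] for s in (1.0, 2.0, 3.0, 4.0)]
router = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]

out, chosen = moe_forward([2.0, 1.0], router, experts, top_k=2)
print("experts used:", chosen)  # experts used: [0, 1]
print("output:", out)
```

All four experts still have to sit in memory even though only two run per token, which is why an MoE’s file size tracks its total, not active, parameter count.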

The benchmark picture tells the story: 73.4% on SWE-bench Verified, 92.7% on AIME26, and 85.2% on MMLU-Pro. If you’re building coding agents or need fast iteration on complex tasks, this is the MoE you want.

The downside is memory: all 35B parameters must stay loaded even though only 3B are active per token, so Q6_K still needs ~25.6GB. Plan accordingly.

Llama 3.3 70B - The Safe Big-Model Choice

Meta’s 70B remains the reliable choice for workloads that need breadth. With 128K context and excellent multilingual support, it’s the workhorse for long-form writing, broad world knowledge, and situations where model reliability trumps raw benchmark chasing.

On IFEval (instruction following), Llama 3.3 70B actually outperforms models twice its size at 92.1%. It’s the model you reach for when you need to trust that your instructions will be followed without drama.

It won’t win any benchmark beauty pageants in 2026. But it will consistently deliver solid outputs, and sometimes that’s worth more than a flashy leaderboard position.

Gemma 4 31B - The Reasoning Champion

Google DeepMind’s Gemma 4 31B is the math and coding specialist that makes other models nervous. With 89.2% on AIME 2026 (math) and a Codeforces Elo of 2150, it’s the choice when your work involves analytical challenges.

The multimodal support is excellent (text, image, audio, video) and the native thinking mode lets you watch the model reason through problems step by step. For anyone doing complex technical work, this model’s thinking process is almost as valuable as its outputs.

At ~32.6GB in Q8_0, it’s a workstation model. But if your work involves heavy reasoning and coding, the investment pays off.

Kimi-Linear-48B-A3B - The Context King

When 1M token context lengths were a novelty, the Kimi-Linear-48B made them practical. Its hybrid linear attention architecture delivers 6× faster decoding at 1M tokens compared to traditional attention, with a 75% reduction in KV cache memory usage.
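One way to arrive at a 75% KV cache reduction is simple layer arithmetic: in a hybrid stack, only the full-attention layers keep a per-token cache, while linear-attention layers carry a fixed-size state. The sketch assumes a 3:1 interleave of linear-attention to full-attention layers (our reading of the Kimi Linear design; treat it as an assumption) plus a hypothetical depth and head layout. Kimi’s full-attention layers use a compressed attention variant, so absolute numbers differ; the ratio is the point.

```python
def kv_bytes_per_token(full_attn_layers: int, n_kv_heads: int = 8,
                       head_dim: int = 128, bytes_per_elem: int = 2) -> int:
    """KV-cache bytes added per token; only full-attention layers keep a cache."""
    return 2 * full_attn_layers * n_kv_heads * head_dim * bytes_per_elem


TOTAL_LAYERS = 48  # hypothetical depth
dense = kv_bytes_per_token(full_attn_layers=TOTAL_LAYERS)
# Assumed 3:1 interleave: three linear-attention layers (fixed-size state,
# no per-token cache) for every full-attention layer.
hybrid = kv_bytes_per_token(full_attn_layers=TOTAL_LAYERS // 4)

print(f"KV cache reduction: {1 - hybrid / dense:.0%}")  # 75%
tokens = 2 ** 20  # ~1M tokens
print(f"at 1M tokens: {dense * tokens / 2**30:.0f} GiB vs {hybrid * tokens / 2**30:.0f} GiB")
```

Under these assumptions the cache at ~1M tokens drops from 192 GiB to 48 GiB; the small constant-size state of the linear layers is ignored here.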

For research, massive document analysis, or whole-codebase Q&A, this is the model that makes “ingest everything” actually feasible on local hardware. Just don’t expect the highest raw benchmark scores; the architecture trades some MMLU-Pro performance for that incredible context efficiency.

The Specialists

Three more models deserve quick recognition:

- Nemotron Super 49B v1.5 : NVIDIA’s reasoning specialist, derived from Llama 3.3 70B through neural architecture search and post-trained for reasoning and agentic tasks such as RAG and tool calling, with a 128K-token context.

- Qwen3-30B-A3B-Thinking-2507 : The thinking model for when you need visible, step-by-step reasoning on math and logic problems. 85% on AIME25, with a thinking mode that actually works.

- Qwen3-VL-32B : Vision-language specialist for OCR, document parsing, chart analysis, and multimodal agent workflows. If you need to understand images deeply, this is your model.

The 32GB Sweet Spot - High Performance, Realistic Hardware

This tier represents the realistic sweet spot for most power users: 32GB of VRAM, enough for strong performance without a workstation upgrade.

Qwen3.5 27B - The People’s Champion

The Qwen3.5 27B is what happens when quantization maturity meets excellent architecture. At Q6_K (~25GB), it delivers 86.1% on MMLU-Pro and 95% on IFEval, numbers that would have turned heads two years ago at twice the size.

The 262K native context means you can actually use that context without jumping through YaRN hoops. Multimodal support handles images. Tool calling works reliably. For general-purpose work (writing, research, coding, agents), this model delivers great quality at a realistic footprint.

Gemma 4 31B - Premium Quality, Premium Price

The 31B dense model for when quality is non-negotiable and speed is nice-but-not-essential. At 89.2% AIME and 2150 Codeforces Elo, the benchmark case writes itself. The 256K context and multimodal support are just icing.

On the minus side, Q6_K needs ~25GB, and Q4 still wants 18GB. Plan your VRAM accordingly.

Qwen3.6-35B-A3B (UD-Q4_K_M) - The Efficient Performer

Remember this model from the 64GB tier? At Q4_K_M quantization (~20-21GB), it becomes a real 32GB option with only a modest quality loss. The MoE architecture means you get 35B parameters’ worth of knowledge at 3B-active-parameter speed.

For coding agents, tool use, and fast iteration, this is the model that lets your 32GB machine punch above its weight class.

DeepSeek-R1 Distill Qwen 32B - The Math Specialist

When your work is math-heavy, this distilled DeepSeek R1 model delivers exceptional results: 94.3% on MATH-500 and 72.6% on AIME 2024, numbers that belong to models twice its size.

The tradeoff : code performance (57.2% on LiveCodeBench) lags behind the generalists, and the 128K context is notably shorter than competitors. But if you’re building a math-focused application, the R1 distillation is remarkably cost-effective.

Mistral Small 24B - The Agentic All-Rounder

Mistral’s 24B hits a different niche: tool-calling and agent workflows. With 84.8% on HumanEval and strong instruction following, it’s the model for building assistants that need to reliably call functions and execute multi-step workflows.

The 32K context is the main limitation. But for local business automation, chat interfaces, and function-calling heavy applications, Mistral Small delivers at a reasonable footprint (~19GB Q6_K).
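Tool calling in practice means sending a list of function schemas with your chat request and parsing the structured call the model sends back. A minimal sketch, assuming an OpenAI-compatible chat-completions server (llama.cpp’s llama-server and Ollama both offer one, though tool-call support depends on the runtime version and chat template). The get_weather tool and the model name are hypothetical, and a canned response stands in for a real server so the parsing logic runs offline.

```python
import json

WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",  # hypothetical function for illustration
        "description": "Get the current temperature for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def build_request(user_msg: str, model: str = "mistral-small") -> str:
    """JSON body for POST /v1/chat/completions (model name is hypothetical)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_msg}],
        "tools": [WEATHER_TOOL],
    }
    return json.dumps(payload)


def extract_tool_calls(response_json: str) -> list[tuple[str, dict]]:
    """Pull (function name, parsed arguments) pairs out of a chat completion."""
    message = json.loads(response_json)["choices"][0]["message"]
    calls = []
    for call in message.get("tool_calls") or []:
        fn = call["function"]
        # In the OpenAI format, arguments arrive as a JSON-encoded string.
        calls.append((fn["name"], json.loads(fn["arguments"])))
    return calls


# A canned reply in the OpenAI format, standing in for a real server response.
fake_reply = json.dumps({
    "choices": [{"message": {
        "role": "assistant",
        "content": None,
        "tool_calls": [{
            "id": "call_0",
            "type": "function",
            "function": {"name": "get_weather", "arguments": "{\"city\": \"Paris\"}"},
        }],
    }}]
})

print(extract_tool_calls(fake_reply))  # [('get_weather', {'city': 'Paris'})]
```

Your application then runs the named function itself and sends the result back as a follow-up message; the model never executes anything on its own.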

The Supporting Cast

- Gemma 4 26B A4B : MoE efficiency with 4B active params out of 26B total. Lower absolute performance than the 31B dense, but excellent for its VRAM efficiency.

- Qwen3.5 9B : A remarkable compact performer at ~13GB. 82.5% MMLU-Pro makes it a legitimate daily driver for users who don’t need maximum quality.

- Llama 3.1 8B : The stable, mature option for users who value ecosystem over benchmarks. Still useful for RAG, document ingestion, and long prompts.

The 16GB Realism Tier - Doing More With Less

This is where local AI becomes truly democratized. 16GB VRAM (the domain of gaming GPUs and mobile workstations) can now run models that would have been science fiction a few years ago.

Qwen3.5 9B - The Daily Driver

At ~9GB in Q4_K_M, this is the model that fits on an RTX 3060 while delivering 82.5% MMLU-Pro and 91.5% IFEval. That’s not “good for a 9B model”; that’s just good, period.

For general chat, drafting, research, and daily tasks, the Qwen3.5 9B is the choice that makes local AI practical for anyone with consumer hardware.

DeepSeek-R1 Distill Qwen 7B - The Math Genius

Distilled R1 reasoning in a 4-5GB package: 92.8% on MATH-500 and 55.5% on AIME 2024. For math-focused applications, this is the budget choice that doesn’t compromise.

Just don’t ask it to code. At 37.6% on LiveCodeBench, the R1 distillation is a specialist, not a generalist.

Qwen2.5 Coder 7B - The Code Specialist

Speaking of specialists: if coding is the task, the Qwen2.5 Coder 7B delivers ~85% on HumanEval at ~4.7GB. For completions, refactors, debugging, and repo Q&A, this is the dedicated code model that beats generalists on code tasks.

On the minus side, general knowledge (40.1% MMLU-Pro) is not this model’s strength.

Phi-4 Mini Reasoning - The Compact Thinker

At 3.8B parameters and ~2.5GB, the Phi-4 Mini Reasoning punches far above its weight class on math: 94.6% on MATH-500 and 57.5% on AIME, remarkable numbers for a sub-4GB model.

English-only is the core limitation. But for math-heavy applications where you need reasoning in a tiny package, Phi-4 Mini Reasoning is a revelation.

Gemma 4 E4B - The Multimodal Lightweight

For tasks that need vision without the VRAM cost, Gemma 4 E4B delivers text + image + audio understanding at ~5-6GB. It’s the model for edge deployment, laptops without dedicated GPUs, and applications that need multimodal support without the flagship footprint.

Benchmarks are modest, but the capability-to-footprint ratio is exceptional.

The Micro Models

The bottom of the stack still has great utility:

- Phi-3.5 Mini : Strong code (86% Python) and 128K context in ~2.8GB. Older model, but still useful.

- Qwen3.5 2B : 262K context in 1.3GB. The tiny giant for long-context retrieval tasks.

- Qwen3.5 0.8B : 262K context in under 1GB. Built for classification, routing, and triage, tasks that don’t need reasoning.

- Gemma 4 E2B-it : Multimodal in 4GB. Runs on smartphones. The edge AI frontier.

Use Case Recommendations

After reviewing the full landscape, here’s some practical guidance.

For 64GB+ Workstations :

- General purpose : Qwen3.6-27B, the do-anything flagship

- Speed + quality : Qwen3.6-35B-A3B, MoE speed with near-27B quality

- Math & reasoning : Gemma 4 31B, 89.2% AIME speaks for itself

- Longest context : Kimi-Linear-48B-A3B, 1M tokens, 6× faster decoding at full length

- Coding agents : Qwen3-Coder 30B-A3B, specialized for code work

For 32GB Machines :

- Best overall : Qwen3.5 27B, benchmark leader, excellent quality

- Value pick : Qwen3.6-35B-A3B Q4_K_M, MoE efficiency at realistic VRAM footprint

- Premium quality : Gemma 4 31B Q6_K, when quality trumps everything else

- Math focus : DeepSeek-R1 Distill Qwen 32B, the math specialist

- Tool calling : Mistral Small 24B, agentic workflows done right

For 16GB Machines :

- Best benchmarks : Qwen3.5 9B Q4, leaderboard-level scores at consumer GPU price

- Math + budget : DeepSeek-R1 Distill Qwen 7B, exceptional math, tiny footprint

- Coding specialist : Qwen2.5 Coder 7B, dedicated code model

- Compact reasoning : Phi-4 Mini Reasoning, 2.5GB of math magic

- Edge/mobile : Gemma 4 E2B, truly portable AI

Liquid Foundation Models - The Architecture That Thinks Differently

While most models in this guide rely on Transformer derivatives (attention mechanisms, feed-forward layers, the usual suspects), Liquid Foundation Models take a different approach. Drawing on Liquid AI’s research into Liquid Neural Networks (LNNs), they are rooted in dynamical systems and signal processing rather than attention alone (LFM2 still keeps a minority of grouped-query attention layers alongside its convolution-based blocks). The result is a family of models that prioritizes real-time adaptation, millisecond latency, and genuine on-device deployment.
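Liquid AI describes LFM2’s convolution blocks as double-gated, short-range, causal convolutions: an input gate, a short convolution over the last few tokens, and an output gate. The sketch below is a single-channel toy of that gate, conv, gate pattern, assuming plain dot-product “projections” and a 3-tap kernel with hypothetical weights; it is not Liquid’s code. It shows why such blocks suit on-device streaming: the running state is just the last few gated inputs, no matter how long the sequence gets.

```python
from collections import deque

Vector = list[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


class GatedShortConv:
    """Single-channel, double-gated causal convolution (toy).

    Streaming form: keeps only the last len(kernel) - 1 gated inputs, so
    per-token memory is constant regardless of sequence length.
    """

    def __init__(self, wb: Vector, wc: Vector, wx: Vector, kernel: Vector):
        self.wb, self.wc, self.wx = wb, wc, wx  # hypothetical projection weights
        self.kernel = kernel                    # kernel[0] weights the current step
        self.history = deque([0.0] * (len(kernel) - 1), maxlen=len(kernel) - 1)

    def step(self, x: Vector) -> float:
        b, c, v = dot(self.wb, x), dot(self.wc, x), dot(self.wx, x)
        gated = b * v                                      # input gate
        window = [gated] + list(reversed(self.history))   # newest first
        conv = sum(k * g for k, g in zip(self.kernel, window))
        self.history.append(gated)                         # oldest entry drops off
        return c * conv                                    # output gate


def full_sequence(xs, wb, wc, wx, kernel):
    """Reference version over a whole sequence (causal, zero-padded)."""
    gated = [dot(wb, x) * dot(wx, x) for x in xs]
    out = []
    for t, x in enumerate(xs):
        conv = sum(kernel[j] * gated[t - j] for j in range(len(kernel)) if t - j >= 0)
        out.append(dot(wc, x) * conv)
    return out


xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 0.0]]
params = dict(wb=[1.0, 1.0], wc=[1.0, 0.5], wx=[0.5, 1.0], kernel=[0.5, 0.25, 0.25])
layer = GatedShortConv(**params)
streamed = [layer.step(x) for x in xs]
print(streamed)                                  # [0.25, 0.3125, 2.8125, 4.0]
print(streamed == full_sequence(xs, **params))   # True
```

The streaming and whole-sequence versions agree, but the streaming one never looks further back than two tokens, which is the property that keeps latency and memory flat on small devices.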

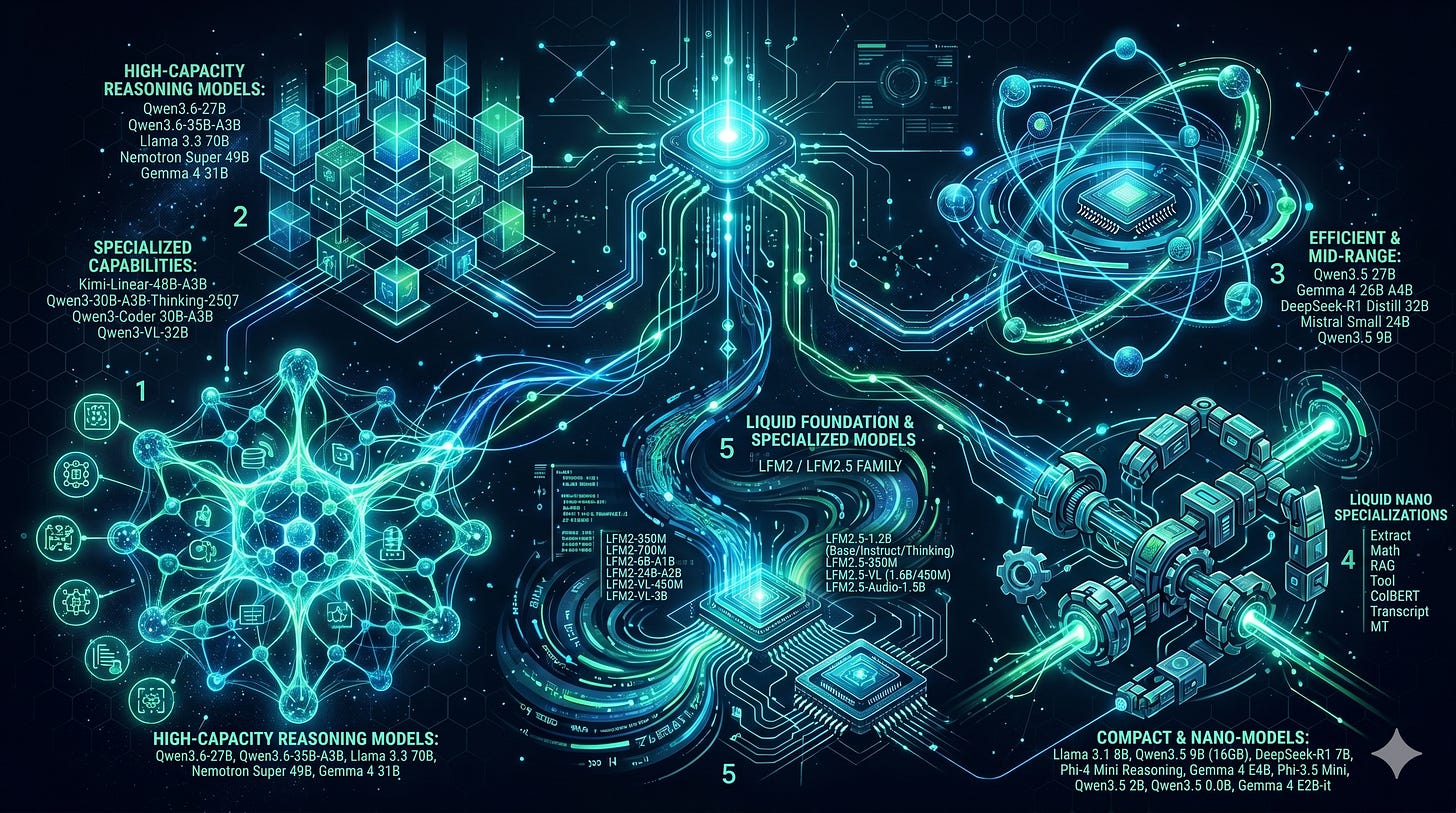

Liquid AI’s model lineup spans two main series: LFM2 and the newer LFM2.5, with variants ranging from 350M to 24B parameters. The philosophy is consistent across sizes: build models that run efficiently anywhere, from cloud servers to smartwatches, without sacrificing reliability.

The LFM2 Series - Production-Ready Foundations

The LFM2 series represents Liquid AI’s current production offering, designed for developers who need deploy-anywhere flexibility.

Text Models

- LFM2-350M : The lightest option in the family. CPU, NPU, and GPU execution make it genuinely device-agnostic; this model can run on hardware that wouldn’t dream of running a Llama variant. Benchmarks are modest (43.43% MMLU, 65.12% IFEval), but for simple classification, extraction, and routing tasks, it’s remarkably capable.

- LFM2-700M : The efficiency midpoint. Multilingual support is a standout feature: if you’re building applications that need to handle non-English text without cloud dependency, this model’s language handling is a genuine asset. 49.9% MMLU and 72.23% IFEval are solid numbers for a sub-1B model.

- LFM2-8B-A1B : The 8-billion-parameter MoE variant with only 1B active parameters per token. This is Liquid AI’s answer to the Qwen3.5 9B question: comparable quality to dense 8B models at a fraction of the active compute. For on-device AI assistants, local chat, and privacy-sensitive applications, this model makes strong sense.

- LFM2-24B-A2B : The flagship text model in the LFM2 series. With 24B total parameters and only 2B active per token, it achieves tool-calling and agentic capabilities on consumer hardware without cloud dependency. This is the Liquid model for serious local agents, the one that may replace your cloud API calls for all but the most demanding tasks.

Vision-Language Models

- LFM2-VL-450M : Compact multimodal processing at under 500M parameters. Text and image understanding in a package that can run on edge devices. For mobile applications, IoT dashboards, and vision tasks where latency matters, this model delivers.

- LFM2-VL-3B : The larger vision specialist at 3 billion parameters. Edge-optimized but capable of meaningful image understanding, document parsing, and multimodal agent workflows. This is the vision model for applications that need real image comprehension but can’t afford cloud round-trips.

The LFM2.5 Series - Scaled Intelligence

The LFM2.5 series marks Liquid AI’s next evolution, with pretraining scaled to 28T tokens and a scaled-up reinforcement learning post-training pipeline. The quality jump is noticeable across the board.

Text Models

- LFM2.5-1.2B-Base : The base model for the 2.5 series. 28T tokens of pretraining give this 1.2B model a quality floor well above what its size would suggest. For developers who need a reliable base to fine-tune, this is a strong starting point.

- LFM2.5-1.2B-Instruct : The instruction-tuned variant, optimized for agentic tasks and reliable instruction following. If you’re building local assistants, this model delivers the follow-instructions behavior you’d normally need a 7B+ model for.

- LFM2.5-1.2B-Thinking : The reasoning variant enables on-device reasoning under 1GB. Yes, a thinking/reasoning model that fits in less than 1GB of memory once quantized. For math-heavy applications where you want visible step-by-step reasoning on embedded hardware, this is a fine achievement.

- LFM2.5-350M : The smallest LFM2.5 model. Liquid AI’s “no size left behind” philosophy means even the smallest model gets the full treatment. This isn’t a neglected also-ran; it’s a first-class citizen in the family.

Vision-Language Models

- LFM2.5-VL-1.6B : Production-ready multimodal agents on any device. 1.6B parameters handling text and images together, built for the kind of edge deployment that other vision models can’t achieve.

- LFM2.5-VL-450M : The compact vision option for the 2.5 series. Structured visual intelligence at the edge, with the same architectural benefits as the rest of the Liquid lineup.

Audio Model

- LFM2.5-Audio-1.5B : End-to-end speech and text generation. 1.5B parameters for low-latency, high-quality conversations. If you’re building voice interfaces that need to work locally, Liquid’s audio model is worth serious attention.

Task-Specific Nano Models

Liquid AI also offers a collection of specialized nano models optimized for specific workloads: Extract, Math, RAG, Tool, ColBERT, Transcript, and MT.

The ColBERT embedding model deserves special mention: Liquid AI’s approach to unified embeddings means you might be able to replace three or four separate embedding models with one. For production systems where embedding quality matters, this is worth benchmarking against separate embedding + retrieval pipelines.
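ColBERT-style models differ from ordinary embedding models in how they score: instead of one vector per document, they keep one vector per token and use “late interaction”. For each query token, take its best cosine match among the document’s tokens, then sum. A minimal sketch of that MaxSim scoring, assuming Liquid’s model follows the standard ColBERT recipe; the 2-dimensional toy embeddings are hypothetical.

```python
import math

Vector = list[float]


def normalize(v: Vector) -> Vector:
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


def maxsim(query_tokens: list[Vector], doc_tokens: list[Vector]) -> float:
    """Late interaction: best cosine match per query token, summed."""
    q = [normalize(t) for t in query_tokens]
    d = [normalize(t) for t in doc_tokens]
    return sum(max(sum(a * b for a, b in zip(qt, dt)) for dt in d) for qt in q)


query = [[1.0, 0.0], [0.0, 1.0]]            # two query-token embeddings
docs = {
    "doc_a": [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
    "doc_b": [[1.0, 1.0]],
}
ranked = sorted(docs, key=lambda name: maxsim(query, docs[name]), reverse=True)
for name in ranked:
    print(name, round(maxsim(query, docs[name]), 3))
```

Because every query token can find its own best match, documents that cover each part of the query score higher than ones that are only vaguely similar overall; the cost is storing many vectors per document.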

How Liquid Compares

A meaningful comparison against the other models in this guide requires context. Liquid’s architecture is fundamentally different from the Transformer-based models that dominate this article. This isn’t a direct competitor to Qwen3.6-27B or Gemma 4 31B in raw benchmark terms. Instead, Liquid models compete on:

Against Qwen3.5 9B / Llama 3.1 8B : The LFM2-8B-A1B offers comparable quality with MoE efficiency, activating roughly 1B parameters per token versus 8-9B for the dense models. For on-device deployment where active parameter count directly maps to latency, Liquid’s architecture advantage is real.
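A common back-of-envelope explains why active parameters map to latency: token-by-token decoding on local hardware is usually limited by memory bandwidth, because every active weight is read once per generated token. The sketch assumes a hypothetical 100 GB/s of memory bandwidth and ~4.85 bits per weight (roughly Q4_K_M); it ignores KV cache reads, compute limits, and routing overhead, so treat the results as optimistic ceilings.

```python
def max_tokens_per_sec(active_params_billions: float, bits_per_weight: float,
                       bandwidth_gb_s: float) -> float:
    """Upper bound for memory-bound decoding: each generated token streams
    every active weight from memory once."""
    bytes_per_token = active_params_billions * 1e9 * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token


BANDWIDTH = 100.0  # GB/s, hypothetical laptop-class memory bandwidth
BPW = 4.85         # assumed ~Q4_K_M

moe = max_tokens_per_sec(1.0, BPW, BANDWIDTH)    # ~1B active (MoE)
dense = max_tokens_per_sec(8.0, BPW, BANDWIDTH)  # 8B dense
print(f"MoE ~{moe:.0f} tok/s, dense ~{dense:.0f} tok/s, ratio {moe / dense:.1f}x")
```

The ceiling scales inversely with active parameters, so eight times fewer active weights means roughly eight times the theoretical decode speed, even though the MoE’s total weights still have to fit in memory.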

Against Phi-4 Mini Reasoning : The LFM2.5-1.2B-Thinking at under 1GB is the direct competitor to Phi-4 Mini Reasoning for on-device math reasoning. Liquid’s dynamical systems approach may offer advantages in multi-step reasoning coherence.

Against Gemma 4 E4B / E2B : LFM2-VL-450M and LFM2-VL-3B offer comparable vision capabilities with Liquid’s architectural benefits: millisecond latency, true on-device execution, NPU optimization.

The architectural differentiation : Where Transformer models scale poorly beyond their training context length, Liquid Neural Networks handle continuous inputs more naturally. For real-time applications, robotics, time-series analysis, or any task where inputs evolve over time, Liquid’s architecture offers fundamental advantages that benchmark comparisons don’t capture.
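The “continuous inputs” point is easiest to see in the original Liquid Time-Constant (LTC) neuron from the LNN research this section builds on: the state follows an ODE, dx/dt = −(1/τ + f)·x + f·A, and each update takes the real elapsed time Δt. Below is a toy single-neuron sketch using the semi-implicit “fused” step described for LTCs, x ← (x + Δt·f·A) / (1 + Δt·(1/τ + f)). The weights, τ, and A are hypothetical, and this illustrates the LNN idea rather than how LFM2’s production layers are implemented.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def ltc_fused_step(x: float, inp: float, dt: float, tau: float = 1.0,
                   A: float = 1.0, w_in: float = 1.0, w_rec: float = 0.0,
                   b: float = 0.0) -> float:
    """One semi-implicit step of a single liquid time-constant neuron.

    f = sigmoid(w_in*inp + w_rec*x + b) modulates the effective time
    constant (the "liquid" part). dt may differ between calls, so irregularly
    sampled inputs are handled by passing the real elapsed time.
    """
    f = sigmoid(w_in * inp + w_rec * x + b)
    return (x + dt * f * A) / (1.0 + dt * (1.0 / tau + f))


# Irregularly spaced timestamps (seconds), e.g. from a sensor that drops samples.
times = [0.0, 0.1, 0.15, 0.6, 0.65, 2.0, 5.0]
x = 0.0
for t_prev, t_next in zip(times, times[1:]):
    x = ltc_fused_step(x, inp=2.0, dt=t_next - t_prev)

f = sigmoid(2.0)
# With w_rec = 0 and constant input, the ODE settles at f*A / (1/tau + f).
print(f"state {x:.4f} vs. steady state {f / (1.0 + f):.4f}")
```

Long gaps simply produce larger steps toward the steady state; nothing in the update assumes evenly spaced samples.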

The enterprise perspective : Liquid AI’s LEAP platform enables customization and fine-tuning within enterprise firewalls. For organizations that need proprietary models but lack the infrastructure to train from scratch, this is an interesting differentiator.

The benchmark table tells part of the story (LFM2-350M at 43.43% MMLU, LFM2-1.2B at 55.23% MMLU), but Liquid’s real value proposition is architectural: models that adapt in real time, deploy anywhere, and prioritize latency in ways that Transformer-based models fundamentally cannot. If your use case fits that profile, Liquid Foundation Models are worth serious evaluation.

Where Liquid Falls Short

The marketing around Liquid Foundation Models is compelling, but the full picture includes real limitations that matter depending on your use case:

Benchmark gaps on core tasks : Liquid AI’s own documentation concedes that LFMs currently struggle with zero-shot code tasks, precise numerical calculations, and tasks that require counting (famously, counting the letter ‘r’ in “strawberry”). For coding agents, math-heavy workloads, or anything requiring precise arithmetic, the Transformer-based models in this guide (Qwen, Gemma, DeepSeek-R1) will consistently outperform Liquid at comparable model sizes.

Retrieval-intensive task limitations : The LFM2 technical report explicitly acknowledges that models with linear attention and state-space operators have “limitations in retrieval-intensive tasks.” Tasks like associative recall (looking up a value given a key from earlier in the context) are fundamental weaknesses of RNN-style architectures versus Transformers. If your application involves querying information across long contexts, Liquid’s architecture is at a structural disadvantage.
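A toy example shows why associative recall is hard for a fixed-size state. The sketch stores three key/value pairs whose keys live in only two dimensions, once in a plain (ungated) linear-attention style memory that sums everything into one 2-dimensional state, and once in an explicit KV cache read with softmax attention. The keys, values, and sharpness factor are hypothetical; delta-rule variants reduce this interference but cannot store more linearly independent keys than the state has dimensions.

```python
import math

Vector = list[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


# Three (key, value) pairs, but keys live in only 2 dimensions.
s = 1 / math.sqrt(2)
keys = [[1.0, 0.0], [0.0, 1.0], [s, s]]
values = [1.0, 2.0, 3.0]


def fixed_state_recall(query: Vector) -> float:
    """Linear-attention style memory: all pairs summed into one 2-d state."""
    state = [sum(v * k[i] for k, v in zip(keys, values)) for i in range(2)]
    return dot(state, query)


def kv_cache_recall(query: Vector, sharpness: float = 20.0) -> float:
    """Softmax attention over an explicit KV cache: every pair is kept."""
    scores = [sharpness * dot(k, query) for k in keys]
    m = max(scores)
    weights = [math.exp(sc - m) for sc in scores]
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)


q = keys[0]  # ask for the value stored under the first key (should be 1.0)
print(f"fixed-size state: {fixed_state_recall(q):.3f}")
print(f"KV cache:         {kv_cache_recall(q):.3f}")
```

The fixed-size state returns about 3.12 because the third key overlaps the first, while the KV cache returns close to 1.0; the price is a cache that grows with every token.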

Weaker instruction following than competitors : The benchmark numbers don’t lie. LFM2-1.2B scores 74.89% on IFEval (instruction following), while Qwen3.5 2B scores higher despite a similar footprint. On agentic tasks that require reliable tool use, multi-step reasoning, and strict adherence to instructions, Liquid models trail the Transformer-based competition.

Training and optimization complexity : Liquid Neural Networks introduce additional complexity that the broader ecosystem hasn’t fully caught up with. Training LNNs involves Backpropagation Through Time (BPTT), gradient stability concerns (vanishing/exploding gradients in continuous-time dynamics), and ODE solver overhead, especially for the original Liquid Time-Constant (LTC) formulations. While CfC (Closed-form Continuous-time) models address the speed bottleneck, the tooling and operational expertise required are still significantly higher than for standard Transformer models.
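The CfC idea replaces numerical ODE integration with a closed-form expression in elapsed time t, following the published form x(t) = σ(−f·t)·g + (1 − σ(−f·t))·h, where f, g, and h are small learned heads of the state and input. The sketch below is a toy single neuron with hypothetical weights; the softplus that keeps f positive is our own choice for the example. The point is that any time t is evaluated in one call, with no solver loop.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def cfc_state(x: float, inp: float, t: float) -> float:
    """Closed-form continuous-time (CfC) update for one toy neuron.

    x(t) = sigmoid(-f*t) * g + (1 - sigmoid(-f*t)) * h
    The weights below are hypothetical placeholders, not trained values.
    """
    f = math.log1p(math.exp(0.8 * inp + 0.5 * x))  # softplus: positive rate (our choice)
    g = math.tanh(0.3 * inp - 0.2 * x)
    h = math.tanh(1.2 * inp + 0.4 * x)
    gate = sigmoid(-f * t)
    return gate * g + (1.0 - gate) * h


# Query arbitrary, irregular times directly; no step-by-step integration needed.
for t in (0.0, 0.5, 3.0, 50.0):
    print(f"t={t:>4}: x={cfc_state(0.1, 1.0, t):+.4f}")
```

At t = 0 the gate sits at 0.5, blending g and h equally; as t grows the output approaches h, so the time constant is learned through f rather than simulated.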

Ecosystem immaturity : The sheer breadth of tooling, quantized variants, fine-tuned derivatives, and community support that exists for Qwen, Llama, and Gemma doesn’t yet exist for Liquid. If you hit a problem, you’re more likely to be in uncharted territory. The “new programming paradigm around working with operators, blocks, and backbones” that Liquid requires is really different; there’s a learning curve that the established model families don’t impose.

Scaling ceiling : While individual Liquid models are parameter-efficient, the architecture faces open questions about scaling to extremely large model sizes. Research notes that “scaling liquid neural networks to very large and high-dimensional state spaces remains open,” and the sequential nature of ODE solving limits parallelization in ways that Transformers don’t face.

Less mature RLHF and preference optimization : Liquid AI notes that “human preference optimization techniques have not been applied extensively to our models yet.” The alignment techniques (RLHF, DPO, constitutional AI) that make Transformer models feel truly helpful and safe are less developed in the Liquid lineup. This shows up in instruction following and general helpfulness benchmarks.

Noise resilience concerns : Standard LNNs may produce overly confident predictions in noisy environments due to a lack of inherent uncertainty mechanisms. Research into uncertainty-aware variants aims to fix this, but it’s a known gap in the current production models.

The bottom line: Liquid Foundation Models excel at edge deployment, latency-sensitive applications, and real-time adaptive tasks. But for general-purpose code generation, math reasoning, and retrieval-heavy workloads (the tasks that dominate most local AI use cases), the Transformer-based models in this article deliver better results out of the box.

The Road Ahead

The local LLM landscape in 2026 is genuinely remarkable. What once required datacenter resources now fits in your workstation, and increasingly in your laptop bag. The combination of MoE architectures, improved quantization techniques, and hybrid attention mechanisms means the gap between “local” and “cloud” performance is narrower than ever.

Whether you’re running a coding agent, doing research on massive document collections, building a local assistant, or just want AI that respects your privacy, there’s never been a better time to go local.

Your RAM has been waiting for this moment. Time to put it to work.

Models discussed in this article : Qwen3.6-27B, Qwen3.6-35B-A3B, Llama 3.3 70B, Nemotron Super 49B, Gemma 4 31B, Kimi-Linear-48B-A3B, Qwen3-30B-A3B-Thinking-2507, Qwen3-Coder 30B-A3B, Qwen3-VL-32B, Qwen3.5 27B, Gemma 4 26B A4B, DeepSeek-R1 Distill 32B, Mistral Small 24B, Qwen3.5 9B, Llama 3.1 8B, DeepSeek-R1 Distill 7B, Qwen2.5 Coder 7B, Phi-4 Mini Reasoning, Gemma 4 E4B, Phi-3.5 Mini, Qwen3.5 2B, Qwen3.5 0.8B, Gemma 4 E2B-it, LFM2-350M, LFM2-700M, LFM2-8B-A1B, LFM2-24B-A2B, LFM2-VL-450M, LFM2-VL-3B, LFM2.5-1.2B-Base, LFM2.5-1.2B-Instruct, LFM2.5-1.2B-Thinking, LFM2.5-350M, LFM2.5-VL-1.6B, LFM2.5-VL-450M, LFM2.5-Audio-1.5B, and Liquid nano models (Extract, Math, RAG, Tool, ColBERT, Transcript, MT).