Autogenesis - When AI Agents Learn to Improve Themselves

(Without Asking Permission)

There is something remarkable about a research paper that proposes to solve a problem by letting AI agents evolve themselves (and manages to do so without once using the phrase “Skynet becomes self-aware”). Wentao Zhang’s Autogenesis: A Self-Evolving Agent Protocol does exactly that, balancing ambition and pragmatism in a field that rarely manages both.

The timing is not coincidental. As large language model-based agent systems tackle increasingly complex, multi-step tasks (writing and testing code, conducting research, coordinating with other systems), the infrastructure holding these systems together has begun to show cracks. We have built impressive robots, but given them clunky instruction manuals written in a language they were never quite designed to follow.

Autogenesis is Zhang’s attempt to give those robots not just better instructions, but the ability to rewrite their own.

The Cracks in the Foundation

The paper opens with an observation that anyone who has built production agent systems will find familiar: current protocols are... let’s say *aspirational*.

Frameworks like A2A (Agent-to-Agent) and MCP (Model Context Protocol) have become standard scaffolding for agentic AI systems. They define how agents communicate, how tools are invoked, how context is managed. What they conspicuously fail to define, Zhang argues, is how agents should change over time: how they should track versions of themselves, manage the lifecycle of resources (prompts, tools, memory), and safely update without breaking the entire system.

The result, he writes, is that agent compositions tend toward what engineers diplomatically call “monolithic compositions and brittle glue code.” Less diplomatically: the kind of spaghetti that makes future maintainers weep into their keyboards at 2am.

This is a real problem. As agent systems grow more sophisticated (coordinating across multiple entities, maintaining long-horizon context, invoking tools dynamically), the lack of explicit evolution interfaces becomes not just an inconvenience but a fundamental bottleneck. You can build impressive individual agents, but evolution-safe updating remains an afterthought at best.

Zhang’s diagnosis is crisp: existing protocols underspecify cross-entity lifecycle and context management, version tracking, and evolution-safe update interfaces.

He’s not wrong. The agent protocols we have are excellent at describing what agents should do, and mediocre at describing how they should grow.

Autogenesis Protocol: Two Layers, One Elegant Idea

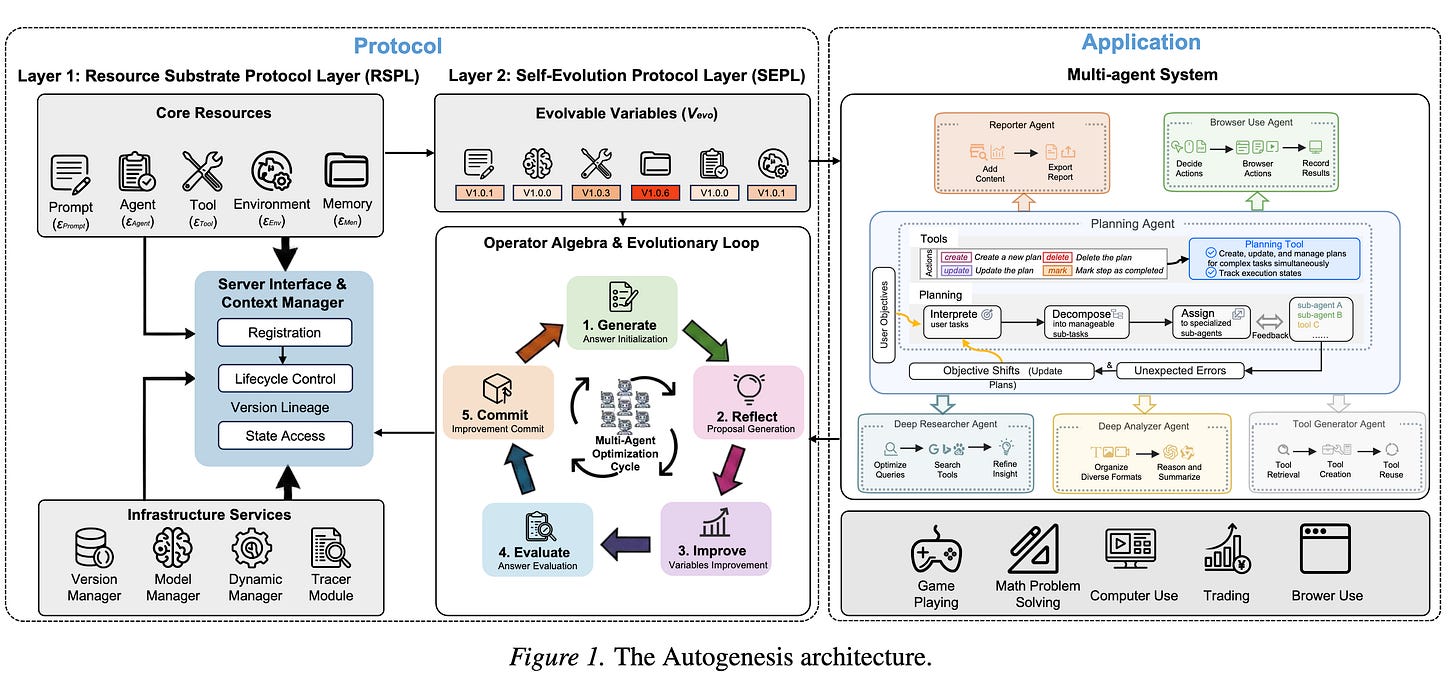

The core contribution is the Autogenesis Protocol (AGP), which rests on a deceptively simple insight: separate the what of evolution from the how.

What evolves? Everything. Prompts, agents, tools, environments, memory. Zhang models all of these as protocol-registered resources with explicit state, lifecycle, and versioned interfaces. Think of it as a kind of taxonomy for the components of an agent system, where each component knows not just what it does but where it came from and how it changes.

How does evolution occur? Through a closed-loop operator interface: propose an improvement, assess whether it actually works, commit if it does, roll back if it doesn’t.

This is the Self Evolution Protocol Layer (SEPL), and it is arguably the more interesting contribution. SEPL specifies an auditable mechanism for agent self-improvement: agents can propose modifications to themselves or other agents, those proposals are evaluated against actual performance metrics, and only verified improvements are committed. Everything is tracked. Everything is revertible. No agent wakes up one morning inexplicably improved, with no record of how.
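
To make the propose, assess, commit, and roll-back loop concrete, here is a minimal Python sketch. It is not the paper's API: `EvolvingPrompt`, `EvolutionRecord`, `evolve`, `rollback`, and the toy scoring function are all hypothetical names invented for illustration, and a real assessment step would score candidates on actual task performance rather than a string check.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class EvolutionRecord:
    """One audited step: what was proposed, how it scored, and the outcome."""
    candidate: str
    baseline_score: float
    candidate_score: float
    committed: bool


@dataclass
class EvolvingPrompt:
    """Hypothetical resource (a prompt) that keeps every committed version."""
    versions: List[str]
    log: List[EvolutionRecord] = field(default_factory=list)

    @property
    def current(self) -> str:
        return self.versions[-1]

    def evolve(self, propose: Callable[[str], str],
               assess: Callable[[str], float]) -> bool:
        """Propose a change, assess it, and commit only if it scores higher."""
        candidate = propose(self.current)
        baseline_score = assess(self.current)
        candidate_score = assess(candidate)
        committed = candidate_score > baseline_score
        if committed:
            self.versions.append(candidate)
        # Rejected proposals are logged too, so the trail stays complete.
        self.log.append(
            EvolutionRecord(candidate, baseline_score, candidate_score, committed)
        )
        return committed

    def rollback(self) -> str:
        """Revert to the previous version; the initial version is never dropped."""
        if len(self.versions) > 1:
            self.versions.pop()
        return self.current


def toy_assess(prompt: str) -> float:
    # Stand-in metric (an assumption): reward prompts that ask for stepwise reasoning.
    return 1.0 if "step by step" in prompt else 0.0


prompt = EvolvingPrompt(versions=["Answer the question."])
prompt.evolve(lambda p: p + " Think step by step.", toy_assess)  # scores higher, committed
prompt.evolve(lambda p: p.upper(), toy_assess)                   # scores lower, rejected
```

After these two calls the prompt holds two versions and the log holds two records, one committed and one rejected. Logging rejected proposals alongside accepted ones is a design choice of this sketch, made so that the history explains both what changed and what was tried.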

The Resource Substrate Protocol Layer (RSPL) handles the state and lifecycle of resources. Each resource (a prompt, a tool, a memory store) gets a standardized interface that makes it queryable, versionable, and composable. The result is less like a hardcoded agent and more like a well-documented system where components can be swapped, updated, and audited without requiring a full architectural rethink.
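
One way to picture a queryable, versioned resource layer is a small registry in which every update creates a new immutable version. The sketch below is an assumption-laden illustration, not the RSPL interface itself: `ResourceRegistry`, `ResourceVersion`, and their methods are hypothetical names, and resources are keyed by name alone for brevity.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class ResourceVersion:
    """Immutable snapshot of one resource at one version."""
    kind: str      # e.g. "prompt", "tool", "memory"
    name: str
    version: int   # 1-based, increases with every update
    payload: str   # the prompt text, tool signature, etc.


class ResourceRegistry:
    """Hypothetical registry: updates never overwrite, they append a version."""

    def __init__(self) -> None:
        self._history: Dict[str, List[ResourceVersion]] = {}

    def register(self, kind: str, name: str, payload: str) -> ResourceVersion:
        """Store a new version of `name` and return it."""
        versions = self._history.setdefault(name, [])
        entry = ResourceVersion(kind, name, len(versions) + 1, payload)
        versions.append(entry)
        return entry

    def get(self, name: str, version: Optional[int] = None) -> ResourceVersion:
        """Fetch the latest version, or a specific earlier one."""
        versions = self._history[name]
        if version is None:
            return versions[-1]
        if not 1 <= version <= len(versions):
            raise KeyError(f"{name} has no version {version}")
        return versions[version - 1]

    def query(self, kind: str) -> List[ResourceVersion]:
        """Return the latest version of every resource of the given kind."""
        return [v[-1] for v in self._history.values() if v[-1].kind == kind]


registry = ResourceRegistry()
registry.register("prompt", "planner", "Plan the task.")
registry.register("prompt", "planner", "Plan the task in numbered steps.")
registry.register("tool", "search", "search(query: str) -> list[str]")

latest = registry.get("planner")               # version 2
original = registry.get("planner", version=1)  # still retrievable
```

Because old versions are never overwritten, swapping a component back is a lookup rather than a reconstruction.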

This layered approach is... refreshing. In a field that often oscillates between “let’s add another abstraction layer” and “actually, we should remove all abstractions,” Zhang’s two-layer design feels considered. RSPL provides the bones; SEPL provides the nervous system for change. The paper’s own illustration of the two layers appears at the top of this section.

Autogenesis System: Self-Evolution in Practice

Building on the protocol, Zhang presents the Autogenesis System (AGS): a self-evolving multi-agent system that dynamically instantiates, retrieves, and refines protocol-registered resources during execution.

In less jargony terms: AGS is a proof of concept that these ideas actually work in practice. Multiple agents coordinate on long-horizon tasks that require planning and tool use across heterogeneous resources. The system can improve its own components mid-execution, with the SEPL layer ensuring that improvements are evaluated before being committed.

The benchmarks used are appropriately demanding: tasks requiring long-horizon planning, multi-step tool invocation, and coordination across heterogeneous resources. AGS shows consistent improvements over strong baselines.

Consistent is the operative word here. The paper is careful not to oversell the results: this is not “our system is 10x better,” but rather “our approach demonstrates measurable improvements on challenging benchmarks, supporting the effectiveness of agent resource management and closed-loop self-evolution.” In a field where every other paper promises transformative gains, the measured tone is almost endearing.

Should I Care?

Let’s be honest about what this paper does and doesn’t do.

What it does well:

First, it identifies a real pain point. Anyone who has built agent systems at scale has encountered the “brittle glue code” problem Zhang describes. The existing protocol landscape has been effective at solving communication but largely punted on the evolution problem. Autogenesis takes that problem seriously.

Second, the two-layer architecture is elegant. Decoupling what evolves from how evolution occurs sounds abstract, but it has practical implications for how you build and maintain agent systems. It also provides a natural place for auditing: you can inspect the SEPL layer to understand exactly what changed and why.
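
As a rough picture of what "inspecting what changed and why" could look like, the sketch below diffs two committed versions of a prompt and attaches the recorded reason. The `CommitEntry` record and the `explain_change` helper are hypothetical, and the history entries are made up for illustration; only `difflib.unified_diff` is a real standard-library call.

```python
import difflib
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CommitEntry:
    """Hypothetical audit record for one committed change."""
    version: int
    text: str
    reason: str  # why the change was accepted


def explain_change(history: List[CommitEntry], version: int) -> str:
    """Describe what changed in `version` relative to its predecessor, and why."""
    by_version = {entry.version: entry for entry in history}
    new, old = by_version[version], by_version[version - 1]
    diff = difflib.unified_diff(
        old.text.splitlines(),
        new.text.splitlines(),
        fromfile=f"v{old.version}",
        tofile=f"v{new.version}",
        lineterm="",
    )
    return "\n".join([f"reason: {new.reason}", *diff])


# Illustrative history only; the reasons are placeholders, not real results.
history = [
    CommitEntry(1, "Plan the task.", "initial version"),
    CommitEntry(2, "Plan the task.\nList the tools you need.",
                "scored higher on held-out tasks (illustrative)"),
]
report = explain_change(history, 2)
print(report)
```

The report pairs the textual diff with the reason recorded at commit time, which is the kind of question an audit trail should be able to answer for any version.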

Third, the closed-loop evaluation mechanism is the right instinct. Self-evolution without evaluation is just self-modification, and self-modification without checks is how you get systems that optimize for the wrong metric in ways that are hard to detect. By requiring that improvements be assessed before being committed, SEPL provides a natural safety valve.

What it’s less clear about:

The benchmarks, while appropriate, are narrow. The paper demonstrates improvements on specific tasks, but the broader claim (that self-evolving agents will consistently outperform static ones) would benefit from more diverse evaluation scenarios. It’s a start, not a conclusion.

The complexity overhead is real. Implementing RSPL and SEPL adds layers of abstraction that could be burdensome for simpler agent systems. Zhang seems aware of this, positioning Autogenesis as most valuable for complex, long-horizon tasks rather than simple one-shot interactions. But the trade-off between flexibility and simplicity is one that practitioners will have to judge carefully.

The governance question is left implicit. If agents can evolve themselves, who sets the evaluation criteria? Who determines what counts as an “improvement”? The protocol handles how evolution occurs, but the what and why (what the goals should be, who gets to define them) remain outside its scope. This is understandable for a technical paper, but it’s the question that will inevitably arise when this work meets production systems.

Final Thoughts

Autogenesis is a genuinely thoughtful piece of work from someone who has clearly built agent systems and felt the pain he describes. It proposes a solution that is architecturally clean, practically motivated, and modestly presented. It does not promise the moon, it does not claim to have solved AGI, and it does not use the word “synergy” even once.

In a field where papers often oscillate between hype and despair, that restraint is itself a kind of achievement.

Whether Autogenesis becomes a foundational layer for the next generation of agent systems, or remains an elegant but underutilized idea, depends on factors the paper cannot control: adoption by framework developers, practical experience with the protocol in production, and the inevitable iteration that comes when rubber meets road.

But for now, it is worth reading: not because it has all the answers, but because it is asking the right questions about a problem that will only become more pressing as agent systems grow more capable.

And in a world where AI systems are increasingly expected to do more, for longer, and with less supervision, figuring out how they should evolve (and how we can make sure they evolve well) seems like a question worth spending time on.

Even if those systems occasionally still manage to be confidently wrong, just with better reasoning.

Implementations

Several GitHub projects have picked up the Autogenesis mantle, some faithfully reimplementing the protocol, others riffing on the same ideas independently.

SkyworkAI/DeepResearchAgent

The official implementation by Wentao Zhang himself. A hierarchical multi-agent research system built directly on the Autogenesis Protocol (RSPL + SEPL), with a top-level planning agent coordinating specialized sub-agents. Resources (prompts, tools, memory) are dynamically instantiated and refined during execution. Includes built-in optimizers (reflection, GRPO, Reinforce++) and benchmark evaluation code for GPQA, AIME, GAIA, and LeetCode. This is the most complete, faithful expression of the paper’s architecture.

EvoAgentX/Awesome-Self-Evolving-Agents

A comprehensive survey repository cataloguing 200+ papers and open-source frameworks in the self-evolving agents space. Not a direct implementation, but an invaluable map of the broader landscape, including frameworks that predate Autogenesis but share its philosophical DNA. A good starting point if you want to understand where Autogenesis sits in relation to the rest of the field.

EvoMap/evolver

A protocol-constrained self-evolution engine built around the Genome Evolution Protocol (GEP). Where Autogenesis separates what evolves from how, GEP packages evolution into reusable assets called genes and capsules. Similar goals, different protocol design. Includes audit trails, a human-in-the-loop review mode, and a structured asset system for governance-conscious evolution.

CharlesQ9/Self-Evolving-Agents

A survey paper and associated repository covering the path to artificial super intelligence through self-evolving agents. References Autogenesis alongside other landmark frameworks (Voyager, Gödel Machine, AlphaEvolve). More of a research map than an implementation, but useful for understanding the broader trajectory the field is moving along.

Wentao Zhang, “Autogenesis: A Self-Evolving Agent Protocol,” arXiv:2604.15034, April 2026.